NVIDIA NemoClaw: Enterprise Security Comes to OpenClaw

On March 16, 2026, NVIDIA CEO Jensen Huang announced NemoClaw at GTC 2026: an open-source security and privacy stack purpose-built for OpenClaw. It's the first major enterprise-focused extension to the OpenClaw ecosystem, and it directly addresses the biggest concern organizations have when deploying AI assistants: keeping sensitive data under control.

NemoClaw is available today as an alpha release on GitHub under the Apache 2.0 license.

The NVIDIA AI Ecosystem

To understand NemoClaw's significance, it helps to see where it fits in NVIDIA's broader AI software stack.

NeMo Framework is NVIDIA's end-to-end platform for training, fine-tuning, and deploying large language models. NemoClaw builds directly on two NeMo components:

- NeMo Guardrails is the programmable safety layer that lets developers define conversational boundaries, topic restrictions, and output validation. NemoClaw extends Guardrails with OpenClaw-specific policies for tool execution and file access.

- TensorRT-LLM is NVIDIA's inference optimization engine that delivers 2-4x throughput improvements over standard PyTorch serving. NemoClaw's local inference uses TensorRT-LLM under the hood, which is why Nemotron models run significantly faster on NVIDIA hardware than generic GGUF quantizations.

The NGC Catalog serves as the distribution channel for Nemotron models. NemoClaw pulls pre-optimized model configurations directly from NGC, including TensorRT-LLM engine files tuned for specific GPU architectures. This means nemoclaw init automatically selects the best model variant for your hardware, with no manual optimization required.

GTC 2026: The Bigger Picture

GTC 2026's overarching theme was "AI in Production": the shift from prototyping to deploying AI systems at scale with enterprise requirements for reliability, security, and governance.

NemoClaw was presented during the open-source segment of Jensen Huang's keynote, alongside several other announcements that shape the hardware and software landscape NemoClaw targets:

- DGX Spark & DGX Station are desktop-class AI systems with Grace Blackwell hardware, making local inference with larger Nemotron models practical outside the data center.

- AI Enterprise 6.0 is NVIDIA's commercial AI platform, which now includes lifecycle management, support SLAs, and compliance tooling for production deployments.

- Blackwell Ultra & Vera Rubin architecture preview showcases next-generation GPU and CPU architectures that will expand the performance envelope for local AI workloads.

NemoClaw's announcement in the open-source segment, rather than the enterprise segment, was a deliberate signal: NVIDIA is investing in the open AI assistant ecosystem, not just proprietary solutions.

What Is NemoClaw?

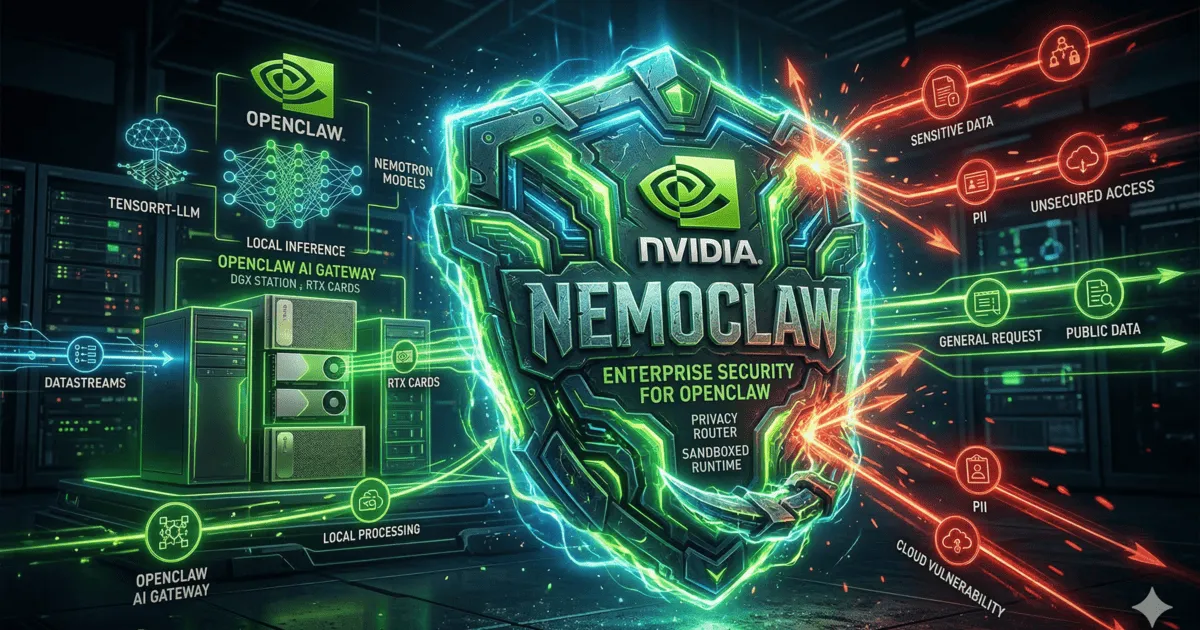

NemoClaw is a collection of open-source tools that wraps around an existing OpenClaw installation to add enterprise-grade privacy routing, sandboxed execution, and local inference capabilities. It combines NVIDIA's Nemotron language models with a hardened runtime called OpenShell to create a security layer that sits between your OpenClaw gateway and the outside world.

The entire stack installs with a single command:

nemoclaw init --config enterprise.yaml

This sets up the Privacy Router, OpenShell Runtime, local Nemotron inference (if supported hardware is detected), and the declarative policy engine. Everything is configured from one YAML blueprint.

Key Features

Privacy Router

The Privacy Router is the centerpiece of NemoClaw. It acts as an intelligent proxy between your OpenClaw gateway and cloud LLM providers, making real-time decisions about where each request should be processed:

- Hybrid routing: sensitive queries are automatically routed to local Nemotron models, while routine requests go to your configured cloud provider (Anthropic, OpenAI).

- PII stripping: personally identifiable information is detected and redacted before any data leaves your network.

- Classification engine: each message is scored for sensitivity using a lightweight local classifier, with configurable thresholds.

    User Message
         ↓
    Privacy Router (sensitivity classification)
         ↓                    ↓
    Local Nemotron       Cloud LLM (PII stripped)
         ↓                    ↓
    OpenClaw Gateway (merged response)

Organizations can define their own sensitivity rules. For example, you can route all messages containing financial data, medical records, or internal project names to local inference only.
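To make the routing decision concrete, here is a minimal Python sketch of threshold-based routing with PII redaction. It is not NemoClaw's implementation: the regex patterns, keyword weights, the 0.5 threshold, and every function name are assumptions chosen for illustration, and a real classifier would be a trained model rather than a keyword list.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns and keyword weights, chosen only for illustration.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
SENSITIVE_TERMS = {"diagnosis": 0.6, "salary": 0.5, "invoice": 0.4}
PII_WEIGHT = 0.3


@dataclass
class Decision:
    target: str   # "local" or "cloud"
    payload: str  # text that would be sent to the chosen backend


def sensitivity_score(message: str) -> float:
    """Toy score in [0, 1]: keyword weights, plus PII_WEIGHT if any PII pattern matches."""
    text = message.lower()
    score = sum(weight for term, weight in SENSITIVE_TERMS.items() if term in text)
    if any(pattern.search(message) for pattern in PII_PATTERNS.values()):
        score += PII_WEIGHT
    return min(score, 1.0)


def strip_pii(message: str) -> str:
    """Replace each PII match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        message = pattern.sub(f"[{label}]", message)
    return message


def route(message: str, threshold: float = 0.5) -> Decision:
    """Keep sensitive messages local; redact everything else before it goes to the cloud."""
    if sensitivity_score(message) >= threshold:
        return Decision("local", message)
    return Decision("cloud", strip_pii(message))
```

With these toy weights, a message that mentions a diagnosis together with an SSN-shaped number stays local, while a routine message that happens to contain an email address goes to the cloud with the address replaced by [EMAIL]. Raising or lowering the threshold is the knob the "configurable thresholds" bullet refers to.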

OpenShell Runtime

OpenShell replaces OpenClaw's default shell execution with a kernel-level sandboxed environment. Every tool invocation, script execution, and file operation runs inside a hardened container with:

- Namespace isolation: each execution gets its own process, network, and filesystem namespace.

- Policy-based access control: fine-grained rules define which tools can access which resources.

- Audit logging: every action is logged with full context for compliance review.

- Network filtering: outbound connections are restricted to explicitly allowed endpoints.

This means even if a skill or tool behaves unexpectedly, it cannot access files, networks, or processes outside its allowed scope.
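The scope check behind that guarantee can be sketched in a few lines of Python. This is only an illustration of default-deny access control, not OpenShell's code: the function names are hypothetical, the allowed root and hosts are borrowed from the example policy later in this article, and a kernel-level sandbox would enforce these rules with namespaces rather than string checks (which, among other things, cannot see symlinks).

```python
from pathlib import PurePosixPath

# Hypothetical scope, mirroring the kind of rules a sandbox policy might hold.
ALLOWED_READ_ROOTS = [PurePosixPath("/data/knowledge-base")]
ALLOWED_HOSTS = {"api.anthropic.com", "api.openai.com"}


def normalize(path: str) -> PurePosixPath:
    """Collapse '.' and '..' lexically so traversal tricks cannot escape a root."""
    parts: list[str] = []
    for part in PurePosixPath(path).parts:
        if part == "..":
            if len(parts) > 1:  # never pop the leading "/"
                parts.pop()
        elif part != ".":
            parts.append(part)
    return PurePosixPath(*parts)


def may_read(path: str) -> bool:
    """True only for absolute paths that stay inside an allowed root."""
    candidate = normalize(path)
    if not candidate.is_absolute():
        return False
    return any(
        candidate == root or root in candidate.parents
        for root in ALLOWED_READ_ROOTS
    )


def may_connect(host: str) -> bool:
    """Default-deny: only exact matches against the allowlist pass."""
    return host.lower() in ALLOWED_HOSTS
```

Here `may_read("/data/knowledge-base/../../etc/passwd")` is rejected because the path normalizes to `/etc/passwd`, and any host that is not explicitly listed is refused.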

Local Inference with Nemotron

NemoClaw ships with optimized configurations for NVIDIA's Nemotron model family, enabling fully local inference for sensitive workloads:

- Zero token costs for locally processed queries. No API calls, no usage charges.

- Air-gapped operation ensures sensitive data never leaves the local network.

- Quantized models in INT4/INT8 variants are optimized for desktop-class GPUs.

- Streaming support provides real-time token generation with the same UX as cloud models.

The Nemotron family includes four tiers, each targeting different hardware profiles:

| Model | Parameters | Target Hardware | Use Case |
|---|---|---|---|
| Nemotron-Mini | 8B | GeForce RTX 4090/5090 | Classification, short Q&A |
| Nemotron-22B | 22B | RTX PRO 6000 | General assistant tasks, summarization |
| Nemotron-49B | 49B | DGX Spark | Complex reasoning, code generation |
| Nemotron-70B | 70B | DGX Station | Full-capability local inference |

NemoClaw uses two quantization strategies to fit these models on different hardware:

- AWQ (INT4) uses Activation-aware Weight Quantization for consumer GPUs. Nemotron-Mini INT4 fits in ~6 GB VRAM while retaining strong classification accuracy.

- SmoothQuant (INT8) balances quality and speed for professional GPUs. Nemotron-22B INT8 on an RTX PRO 6000 achieves approximately 45 tokens/s, fast enough for real-time streaming responses.
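A quick back-of-the-envelope calculation shows why quantization decides which model fits on which GPU. The sketch below uses the standard estimate of weight storage (parameters times bits per weight, divided by 8); it ignores KV cache, activations, and runtime overhead, and the FP16 figure for the unquantized 70B model is an assumption, since the article only calls that variant "full".

```python
# Bits stored per weight at each precision.
BITS_PER_WEIGHT = {"INT4": 4, "INT8": 8, "FP8": 8, "FP16": 16}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate weight storage in GB (10^9 bytes): parameters x bits / 8."""
    bits = BITS_PER_WEIGHT[precision]
    return params_billions * bits / 8


for name, size, precision in [
    ("Nemotron-Mini", 8, "INT4"),
    ("Nemotron-22B", 22, "INT8"),
    ("Nemotron-49B", 49, "FP8"),
    ("Nemotron-70B", 70, "FP16"),  # precision assumed for the "full" variant
]:
    print(f"{name} {precision}: ~{weight_memory_gb(size, precision):.0f} GB of weights")
```

This prints roughly 4 GB, 22 GB, 49 GB, and 140 GB. The 4 GB of INT4 weights for Nemotron-Mini is consistent with the ~6 GB VRAM figure quoted above once runtime overhead is added on top.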

The local models handle classification, summarization, and general Q&A tasks. Complex reasoning tasks can still be routed to cloud providers (with PII stripping) when needed.

Declarative Policy Engine

All security configuration lives in versioned YAML blueprints that define the complete security posture of your deployment:

    # nemoclaw-policy.yaml
    privacy:
      pii_detection: strict
      routing:
        sensitive: local
        default: cloud
    runtime:
      sandbox: openshell
      network:
        allow:
          - "api.anthropic.com"
          - "api.openai.com"
        deny: ["*"]
      filesystem:
        read: ["/data/knowledge-base"]
        write: ["/tmp/openclaw"]
    audit:
      level: full
      retention: 90d
      export: siem

Policies are version-controlled, auditable, and can be templated across multiple deployments. Changes to the policy file are validated and applied without restarting the OpenClaw gateway.
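The "validate, then apply without a restart" behavior can be illustrated with a small Python sketch. Everything here is hypothetical: the allowed values are guesses based on the example blueprint, the function names are invented, and the policy is passed in as an already-parsed dictionary rather than read from YAML.

```python
import re

# Hypothetical schema: the allowed values below are guesses, not NemoClaw's real options.
ALLOWED = {
    ("privacy", "pii_detection"): {"strict", "relaxed", "off"},
    ("runtime", "sandbox"): {"openshell", "none"},
    ("audit", "level"): {"full", "minimal", "off"},
}
RETENTION = re.compile(r"^\d+d$")


def validate(policy: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means the policy passed."""
    errors = []
    for (section, key), choices in ALLOWED.items():
        value = policy.get(section, {}).get(key)
        if not isinstance(value, str) or value not in choices:
            errors.append(f"{section}.{key}: {value!r} is not one of {sorted(choices)}")
    retention = str(policy.get("audit", {}).get("retention", ""))
    if not RETENTION.match(retention):
        errors.append(f"audit.retention: {retention!r} must look like '90d'")
    return errors


def apply_if_valid(current: dict, candidate: dict) -> tuple[dict, list[str]]:
    """Swap in the candidate only when it validates; otherwise keep the running policy."""
    errors = validate(candidate)
    return (current if errors else candidate), errors
```

The design point is that a bad edit never replaces the running policy: `apply_if_valid` returns the previous policy together with the error list, so the gateway keeps enforcing the last known-good configuration.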

Hardware Requirements

NemoClaw's local inference features require an NVIDIA GPU. The Privacy Router and OpenShell Runtime work on any hardware, but each local Nemotron model needs the hardware listed here:

| Hardware | Local Inference | Privacy Router | OpenShell |
|---|---|---|---|
| GeForce RTX 4090/5090 | Nemotron-Mini (INT4) | Yes | Yes |
| RTX PRO 6000 | Nemotron-22B (INT8) | Yes | Yes |
| DGX Spark | Nemotron-49B (FP8) | Yes | Yes |
| DGX Station | Full Nemotron-70B | Yes | Yes |
| No NVIDIA GPU | Cloud-only routing | Yes | Yes |

Without a supported GPU, NemoClaw still provides the Privacy Router (PII stripping before cloud requests) and OpenShell sandboxing, just without local inference.
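The hardware table amounts to a lookup with a cloud-only fallback, which the following Python sketch spells out. The rows are copied from the table; the function, its return shape, and the idea of matching on a hardware name string are assumptions for illustration, not a description of how nemoclaw init detects hardware.

```python
from typing import Optional

# Rows copied from the hardware table; the matching logic itself is hypothetical.
LOCAL_MODEL_BY_HARDWARE = {
    "GeForce RTX 4090": ("Nemotron-Mini", "INT4"),
    "GeForce RTX 5090": ("Nemotron-Mini", "INT4"),
    "RTX PRO 6000": ("Nemotron-22B", "INT8"),
    "DGX Spark": ("Nemotron-49B", "FP8"),
    "DGX Station": ("Nemotron-70B", "full"),
}


def plan_deployment(detected_hardware: Optional[str]) -> dict:
    """Privacy Router and OpenShell are always enabled; local inference depends on the GPU."""
    plan = {
        "privacy_router": True,
        "openshell": True,
        "local_model": None,
        "routing": "cloud-only",
    }
    match = LOCAL_MODEL_BY_HARDWARE.get(detected_hardware or "")
    if match:
        model, precision = match
        plan["local_model"] = f"{model} ({precision})"
        plan["routing"] = "hybrid"
    return plan
```

Calling `plan_deployment(None)` returns a plan with cloud-only routing and both security components still enabled, which matches the last row of the table.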

Comparison with Other Solutions

NemoClaw isn't the only tool addressing AI security and privacy. Here's how it compares to other solutions in the space:

| Feature | NemoClaw | Azure AI Content Safety | NeMo Guardrails | Presidio | Custom Proxy |
|---|---|---|---|---|---|
| PII detection/redaction | Yes (local) | Yes (cloud) | No | Yes (local) | Manual |
| Hybrid routing (local/cloud) | Yes | No | No | No | Manual |
| Sandboxed execution | Yes (OpenShell) | No | No | No | Varies |
| Local inference | Yes (Nemotron) | No | No | No | Varies |
| Declarative policies | Yes (YAML) | Portal/API | Yes (Colang) | Config | Custom |
| OpenClaw integration | Native | Requires adapter | Requires adapter | Requires adapter | Custom |
| Open source | Yes (Apache 2.0) | No | Yes (Apache 2.0) | Yes (MIT) | N/A |
| Audit logging | Built-in | Built-in | Limited | No | Custom |

Each tool has its strengths. Azure AI Content Safety offers managed scalability, NeMo Guardrails provides fine-grained conversational control, and Presidio excels at standalone PII detection across multiple languages. NemoClaw's differentiator is that it combines privacy routing, sandboxing, and local inference into a single stack purpose-built for the OpenClaw ecosystem.

Why This Matters for OpenClaw Users

OpenClaw's biggest barrier to enterprise adoption has been the security question: how do you give an AI assistant access to your files, emails, and tools without risking data leaks? NemoClaw directly answers that concern.

As Jensen Huang put it during the GTC keynote:

"AI assistants need to be as secure as they are capable. NemoClaw makes OpenClaw enterprise-ready. Privacy by default, not privacy as an afterthought."

Key implications:

- Regulated industries (healthcare, finance, legal) can now deploy OpenClaw with auditable security controls.

- Enterprise IT teams get the policy-based governance they need to approve OpenClaw deployments.

- Open-source ecosystem benefits from NVIDIA's investment in hardened tooling.

- Self-hosters get a plug-and-play security layer without building custom solutions.

The community response has been significant. Within 24 hours of the announcement, the NemoClaw repository received over 2,000 GitHub stars. Discussions in the OpenClaw community are already focused on integration patterns, particularly around combining NemoClaw's Privacy Router with existing OpenClaw skill ecosystems. Several contributors have begun working on adapters for non-Nemotron models, signaling that the architecture's modular design is resonating with developers.

What's Next for NemoClaw

NemoClaw is currently in alpha, but NVIDIA has outlined a clear path forward:

- NemoClaw 1.0 (expected Q3 2026) will bring stable API surfaces, production-ready OpenShell with SELinux and AppArmor profiles, and compliance policy templates for SOC 2, HIPAA, and GDPR. Based on NVIDIA's announced roadmap, the 1.0 release is expected to mark the transition from experimental to production-supported.

- Multi-model support is expected in the 1.0 release, covering GGUF and TensorRT-LLM-compatible models beyond the Nemotron family. This aligns with NVIDIA's stated goal of making NemoClaw model-agnostic for local inference.

- NVIDIA AI Enterprise integration is a logical next step, bringing commercial support and SLAs through the AI Enterprise platform, including NGC model lifecycle management for organizations that need vendor-backed guarantees.

These are forward-looking items based on NVIDIA's public communications. Because the project is open source, community contributions will likely shape the roadmap alongside NVIDIA's plans.

What This Means for ClawNest Users

At ClawNest, we're closely tracking NemoClaw's development. Here's how it fits into our managed hosting platform:

- Complementary, not competing. NemoClaw handles security policy and local inference; ClawNest handles uptime, backups, and deployment simplicity. The two work well together.

- Privacy Router compatibility. ClawNest instances can be configured to route through a NemoClaw Privacy Router running on your own network, giving you PII stripping before data reaches our servers.

- Enterprise plans. We're evaluating NemoClaw integration for future ClawNest enterprise tiers, including managed OpenShell sandboxing and policy templates.

If you're running OpenClaw on ClawNest today and want to layer NemoClaw on top for additional security controls, the Privacy Router can sit between your messaging channels and your ClawNest instance as a local proxy.

Getting Started with NemoClaw

NemoClaw is available now in alpha on GitHub:

- Requirements: Node.js 22+, OpenClaw 0.9+, NVIDIA GPU (optional, for local inference)

- Install: npm install -g nemoclaw@alpha

- Initialize: run nemoclaw init to generate your first policy blueprint

- Connect: point NemoClaw at your existing OpenClaw gateway

NemoClaw is in early alpha, so expect breaking changes and limited model support. NVIDIA's roadmap targets a stable 1.0 release in Q3 2026.

For the full documentation and source code, visit the NemoClaw GitHub repository.
